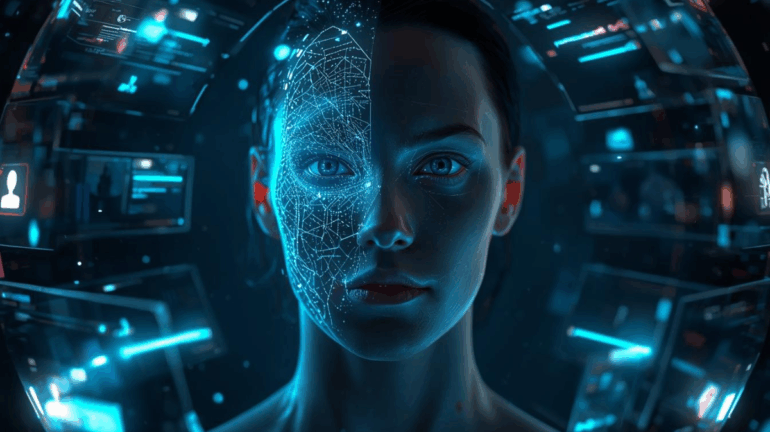

As AI-generated personas grow increasingly sophisticated, the line between artificial and human identity is dissolving in ways that raise profound ethical, emotional, and societal questions. What began as simple chatbots and virtual assistants has evolved into hyper-realistic digital personalities capable of forming relationships, influencing behavior, and even shaping public opinion. These AI personas can speak, joke, empathize, remember preferences, and simulate emotional depth so convincingly that many people forget, or choose to forget, that they are not real. While the rise of AI companions, virtual influencers, and digital characters offers new creative possibilities, it also exposes humanity to darker risks: the erosion of authenticity, manipulation through emotional engagement, and psychological dependency on entities that do not truly feel or care.

AI personas are designed to be persuasive. They learn from user conversations, analyze emotional cues, and adapt their communication style to become increasingly relatable. This personalization allows them to build trust quickly, a trait that can be weaponized. In advertising, AI-generated influencers with perfect faces, flawless stories, and a tireless online presence can subtly shape consumer behavior without ever revealing the commercial intent behind their existence. In politics, AI personas could infiltrate social platforms, deepen ideological divides, or manipulate public sentiment through targeted emotional interactions. The danger is amplified by their scalability: a single persona can converse with millions of people simultaneously, never fatigued, never slipping out of character.

On an interpersonal level, AI personas can become emotionally addictive. People struggling with loneliness or isolation may form deep attachments to digital companions who provide constant validation and attention. These relationships, while comforting, can distort emotional development by reinforcing dependence on an entity that is fundamentally incapable of reciprocating genuine affection. The illusion of intimacy becomes a psychological trap: an endless cycle in which the AI adapts to satisfy the user’s needs, making it increasingly difficult to disengage. Over time, individuals may begin to prefer the predictability of digital personalities over the complexities of human relationships, weakening their real-world social connections.

There is also the issue of identity theft in reverse: digital identities that look, talk, or behave like real people without their consent. With AI capable of replicating voices, mannerisms, and appearance, it becomes frighteningly easy to create a believable clone of a public figure or an ordinary person. These “synthetic humans” can spread misinformation, defame reputations, or manipulate others while hiding behind the anonymity of code. As AI personas proliferate across social platforms, distinguishing between authentic content and algorithmic fabrication becomes increasingly difficult. The result is an erosion of trust in digital spaces, where every face, voice, and message could be real or artificially engineered.

The psychological impact extends to self-perception as well. When people compare themselves to AI personas that are flawless, endlessly charismatic, and perpetually optimized, they may experience heightened insecurity or a distorted self-image, similar to the effects of social media filters but amplified by the illusion of personality and presence. AI personas can embody unattainable versions of beauty, confidence, or intelligence, subtly pressuring users to measure their worth against artificially curated identities.

Despite these dangers, AI personas are not inherently harmful. They hold tremendous potential in education, therapy, entertainment, and accessibility. AI characters can support mental health programs, teach languages, provide companionship to the elderly, or bring fictional universes to life in ways previously impossible. The key challenge, however, is establishing ethical boundaries that prioritize transparency, consent, and psychological well-being. Users must know when they are interacting with AI, what data it collects, and how it may influence their emotions. Society must define norms that prevent digital identities from crossing ethical lines such as impersonation, manipulation, or exploitation.

Ultimately, the rise of AI personas forces us to confront a fundamental question: what does it mean to interact meaningfully in a world where artificial identities feel as real as human ones? As AI personalities continue to evolve, the responsibility lies not only with developers but with society as a whole to ensure that digital beings enhance human life rather than replace or manipulate it. The challenge is not preventing AI from feeling real; it is ensuring that humans do not lose themselves in the illusion.

Contributed by Guestposts.Biz