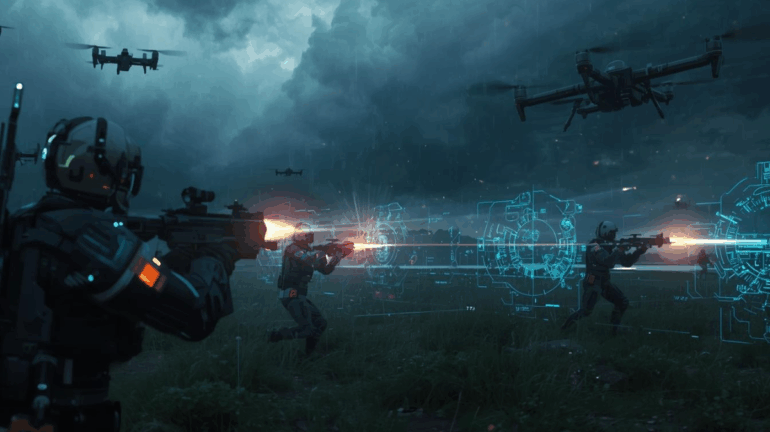

The rise of artificial intelligence in military technology is reshaping modern warfare, raising both strategic opportunities and profound ethical dilemmas. Autonomous weapons systems, machines capable of selecting and engaging targets without direct human intervention, are no longer theoretical. Drones, robotic sentries, and AI-assisted missile systems are increasingly deployed in defense strategies worldwide. Proponents argue that AI can reduce human casualties, improve battlefield efficiency, and execute complex operations with precision unattainable by human forces alone.

Autonomous systems can process battlefield data in real time, identify threats, and respond faster than any human, potentially preventing mistakes caused by fatigue, panic, or incomplete information. They can also be used for surveillance, reconnaissance, and logistical coordination, transforming the speed and scale of military operations.

However, the ethical and humanitarian implications of AI-driven warfare are immense. Delegating life-and-death decisions to machines challenges fundamental principles of accountability, morality, and international law. Who is responsible if an autonomous weapon makes an erroneous strike that kills civilians? Can machines truly assess proportionality or context in the fog of war? These questions have sparked global debates about the necessity of “human-in-the-loop” safeguards to ensure meaningful human oversight.

There are also strategic concerns. AI weapons could escalate conflicts by enabling faster, more unpredictable strikes, potentially reducing the window for diplomacy. Cybersecurity risks compound the danger: hacked autonomous systems could be turned against their operators, creating unprecedented vulnerabilities. International organizations and experts have called for treaties to regulate or ban certain autonomous weapon systems, but progress has been slow as nations race to achieve military advantage.

Beyond the battlefield, AI is transforming defense planning and intelligence. Machine learning algorithms analyze satellite imagery, communications, and social media to predict conflicts, identify supply chains, and monitor adversaries. Simulations driven by AI allow commanders to model multiple scenarios, optimize strategies, and reduce uncertainty in decision-making. While these capabilities enhance national security, they also centralize power and raise questions about transparency and bias.

Ultimately, AI in warfare presents a paradox: it can make military operations safer, more precise, and more effective, yet its misuse could lead to catastrophic ethical and geopolitical consequences. The challenge lies in balancing technological advantage with human responsibility, ensuring that the deployment of AI in combat adheres to humanitarian principles and legal norms. As AI continues to evolve, it will not merely assist in warfare; it will define it.

The future may see hybrid systems where humans and machines co-command, sharing cognition, strategy, and judgment. Yet, society must confront difficult moral questions today: what rules govern autonomous life-and-death decisions, and how do we preserve accountability in a world where machines can act faster than humans can respond? The answers will shape the future of global security and the ethical framework of war in an age dominated by intelligent machines.

Contributed By Guestposts.Biz