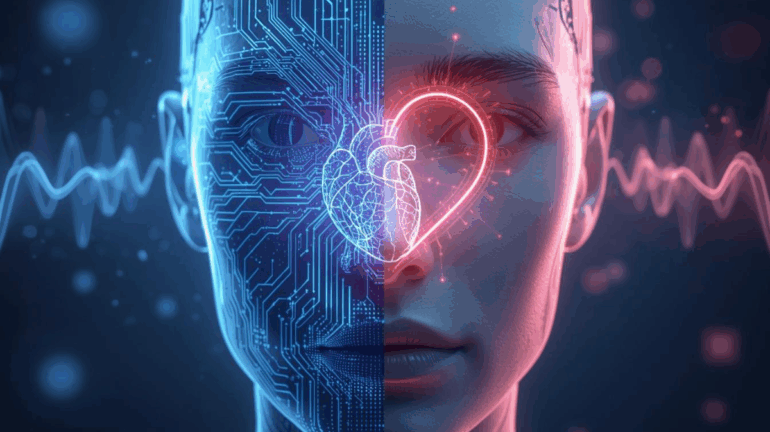

Artificial intelligence is advancing beyond logic and computation: it is now learning to understand, interpret, and even emulate human emotion. This frontier, known as emotional AI or affective computing, is reshaping the way machines interact with people, bringing empathy into algorithms and redefining the relationship between humans and technology. By recognizing emotions through voice tone, facial expression, text sentiment, or physiological data, AI systems can build more human-like connections. In sectors such as healthcare, education, marketing, and customer service, this emotional awareness lets machines respond not just with facts but with sensitivity to how a person feels. Imagine a mental health chatbot that detects distress in a user’s voice and adjusts its tone to offer comfort, or an AI tutor that recognizes a student’s frustration and rephrases the lesson with encouragement. Emotional AI aims to bridge the gap between computation and compassion.

Behind the scenes, the technology draws on psychology, neuroscience, and deep learning. Models analyze voice inflection, facial micro-expressions, heart rate, and linguistic patterns to estimate a person’s emotional state. Machine learning then maps these signals to emotion categories and selects responses that simulate understanding. Whereas early AI systems were emotionless and mechanical, today’s emotional models are increasingly adaptive and context-aware, and in some settings can distinguish among sarcasm, joy, anxiety, and fatigue. Major companies such as Microsoft, IBM, and Affectiva already deploy emotional AI to improve user experience, while automotive firms build it into driver-monitoring systems that detect distraction or drowsiness. In workplaces, emotionally aware AI assistants are being used to help manage stress and improve communication, and in healthcare they support patient therapy through empathetic dialogue.
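To make the signal-to-response pipeline in the previous paragraph more concrete, here is a minimal Python sketch of one common pattern, weighted late fusion. It assumes that separate upstream models for voice, face, and text each output a probability distribution over the same emotion labels; those distributions are combined with per-modality weights, and the strongest emotion picks a response tone. The label set, weights, scores, and tone table are all hypothetical illustrations, not how Microsoft, IBM, or Affectiva implement their systems.

```python
from typing import Dict, List

# Hypothetical label set; real systems use their own emotion taxonomies.
EMOTIONS: List[str] = ["joy", "anxiety", "fatigue", "neutral"]

# Hypothetical mapping from detected emotion to a response style.
TONES: Dict[str, str] = {
    "joy": "upbeat",
    "anxiety": "calm and reassuring",
    "fatigue": "brief and gentle",
    "neutral": "informative",
}


def fuse_modalities(
    scores: Dict[str, List[float]], weights: Dict[str, float]
) -> Dict[str, float]:
    """Weighted late fusion: average per-modality probability vectors."""
    fused = [0.0] * len(EMOTIONS)
    total_weight = 0.0
    for modality, probs in scores.items():
        if len(probs) != len(EMOTIONS):
            raise ValueError(
                f"{modality}: expected {len(EMOTIONS)} scores, got {len(probs)}"
            )
        w = weights.get(modality, 0.0)  # modalities without a weight are ignored
        for i, p in enumerate(probs):
            fused[i] += w * p
        total_weight += w
    if total_weight <= 0:
        raise ValueError("at least one modality needs a positive weight")
    # Normalize by total weight so the result is still a probability distribution.
    return {label: value / total_weight for label, value in zip(EMOTIONS, fused)}


def choose_tone(fused: Dict[str, float], threshold: float = 0.4) -> str:
    """Pick a tone for the strongest emotion; fall back to neutral if uncertain."""
    top = max(fused, key=fused.get)
    return TONES[top] if fused[top] >= threshold else TONES["neutral"]


if __name__ == "__main__":
    # Hypothetical per-modality outputs for one user utterance.
    scores = {
        "voice": [0.1, 0.6, 0.2, 0.1],
        "face": [0.2, 0.5, 0.2, 0.1],
        "text": [0.3, 0.3, 0.1, 0.3],
    }
    weights = {"voice": 0.4, "face": 0.4, "text": 0.2}
    fused = fuse_modalities(scores, weights)
    print(fused)
    print(choose_tone(fused))
```

With these example numbers, voice and face both lean toward anxiety, so the fused distribution peaks at anxiety (about 0.5) and the sketch selects the "calm and reassuring" tone. Late fusion is shown here only because it is easy to read; many systems instead fuse raw features inside a single model, and the confidence threshold is a simple guard against reacting strongly to an ambiguous signal.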

But this evolution raises profound ethical and philosophical questions. Can a machine truly feel empathy, or is it simply mimicking it? Is simulated emotion as meaningful as genuine compassion? Emotional AI lacks consciousness. It does not feel joy or sorrow; it only recognizes and predicts them. Yet its ability to reproduce the effects of empathy can be powerful, even healing, in human contexts. The darker side of emotional AI, however, lies in manipulation: if technology can read our feelings, it can also influence them. Marketing algorithms may exploit emotional triggers, governments could use emotion-aware surveillance to predict dissent, and personal privacy could erode as AI learns to read not just our data but our moods. Regulation is therefore critical to ensure that emotional AI enhances wellbeing rather than exploiting vulnerability.

As emotional AI continues to mature, one of its most fascinating frontiers is cross-cultural empathy. Emotions may be universal, but their expression varies across societies: what signals politeness in one culture may signal discomfort in another. Training AI systems to navigate these subtleties requires large datasets drawn from diverse populations, along with cultural calibration to prevent bias. Affective computing researchers are developing more globally representative emotion datasets to teach AI to interpret nuanced expressions and context-dependent emotional cues. This matters especially for applications such as global customer support, virtual therapy, and AI-assisted diplomacy.

Moreover, integrating emotional AI with emerging technologies such as the metaverse and social robots opens new possibilities for companionship and collaboration. Imagine robots that sense loneliness and respond with comforting dialogue, or virtual assistants in immersive worlds that adapt their tone to the user’s emotional state. Emotional AI may also play a pivotal role in education, where emotionally responsive tutors could help children build resilience, confidence, and curiosity. Ultimately, teaching machines to “feel” is not about replacing human emotion; it is about amplifying empathy through technology, helping AI bridge gaps in understanding and strengthen the emotional fabric of digital communication.

As we move forward, the challenge lies in designing systems that reflect ethical empathy: emotional understanding that respects autonomy and privacy. Researchers envision “ethical emotion models” that incorporate moral reasoning and analyze emotions only with the user’s consent. Some futurists predict that AI will soon develop a kind of synthetic empathy, producing both rational and emotional responses that feel authentic to users. Whether this constitutes true emotion or sophisticated imitation may matter less than its impact on human life. Emotional AI could transform how we connect, turning machines from tools into companions and from responders into listeners. The next generation of intelligent systems will not just process commands but perceive the emotional context behind them, creating a world where technology doesn’t just think but feels with us.

contributed by guestpost.biz